
29.01.2020

Dear reader,

Welcome to the 5th edition of the UPTIME Newsletter. The UPTIME project is in its final year, with the prototype of the UPTIME Platform currently undergoing its final configuration and integration into the three industrial business cases. In this edition, our partner Whirlpool shares their first-hand experience of the implementation of the UPTIME Platform in their complex automatic white goods production line, which produces drums for clothes dryers, as well as some lessons learned and practical guidelines for manufacturers to get started with Predictive Maintenance.

The prototypes of UPTIME_ANALYZE and UPTIME_FMECA, another two of the core technical components of the UPTIME Platform, have been validated in the Whirlpool Business Case and their main features and functionalities are introduced in this newsletter.

Moreover, I am pleased to inform you that the UPTIME Platform will be showcased at the upcoming “Hannover Messe”, which will take place from 20 – 24 April 2020. On this occasion, we would like to invite you to visit our booth, which is located at the Predictive Maintenance Pavilion in Hall 16.

During this fair, we will have several showcase sessions at the booth presenting the features of the UPTIME Platform and demonstrating their implementation in our industrial use cases. More details about the UPTIME Showcase at the Hannover Fair will be made available on our website soon. We hope to meet you there and we look forward to exchanging ideas and getting your valuable feedback.

Besides a physical demonstration, we also plan to organise a series of live webinars to demonstrate the UPTIME Platform. The first webinar, titled “UPTIME Predictive Maintenance: Lessons Learned and Best Practices in White Goods Industry“, will take place on Thursday, 19 March 2020, 11:00 – 12:30 CET. For registration and more detailed information about the webinar, please visit here.

We hope you enjoy our newsletter!

The UPTIME Platform consists of six main components, addressing various phases of the unified predictive maintenance approach. In our previous newsletters released in May and September 2019, we introduced the component UPTIME_DECIDE for maintenance decision-making and action planning, UPTIME_SENSE for data acquisition and manipulation, and UPTIME_DETECT & _PREDICT for stream data analytics. In this edition, we present the component UPTIME_ANALYZE for batch data analytics and UPTIME_FMECA for risk assessment.

UPTIME_ANALYZE is a data-at-rest analytics engine driven by the need to leverage manufacturers’ legacy data and operational data related to maintenance, and to extract and correlate relevant knowledge. It is mainly addressed to manufacturers’ maintenance teams, including maintenance managers and factory workers, who need to gain insights into what happened in the past (from historical data) and what is happening at the moment or in the near past/future (from operational data).

Key advantages of UPTIME_ANALYZE include:

• Extract & correlate relevant knowledge from legacy & operational data

• Address the cold start problem with some initial predictions for predictive maintenance even when adequate sensor data are not yet available

• Facilitate interfacing with 3rd party systems already deployed in a manufacturing site in order to obtain additional maintenance-related data

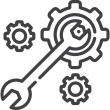

UPTIME_ANALYZE allows a manufacturer to upload different datasets that have been extracted from the legacy and operational systems (Data Uplifting Layer). In order to ensure that the datasets provided fall within the scope of predictive maintenance, they are mapped at a semantic and syntactic level to the UPTIME Predictive Maintenance Data Model in a semi-automatic manner (Data Curation, Matching and Transformation Layer).
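To give an idea of what a semi-automatic mapping step might look like, here is a minimal Python sketch. It is not the UPTIME_ANALYZE implementation: the target field names, the `suggest_mapping` helper and the similarity cutoff are all assumptions made for illustration, and the sketch only covers syntactic (name-based) matching. Column names from an uploaded dataset are compared with the data model fields, and the closest match is proposed for a user to confirm or correct.

```python
from difflib import get_close_matches

# Hypothetical target fields; the actual UPTIME data model is not described here.
TARGET_FIELDS = ["equipment_id", "timestamp", "failure_mode", "downtime_minutes"]


def normalise(name):
    """Lower-case a column name and unify its separators."""
    return name.strip().lower().replace(" ", "_").replace("-", "_")


def suggest_mapping(source_columns, target_fields=TARGET_FIELDS, cutoff=0.6):
    """Propose one target field per source column, or None if nothing is close."""
    suggestions = {}
    for column in source_columns:
        matches = get_close_matches(normalise(column), target_fields, n=1, cutoff=cutoff)
        suggestions[column] = matches[0] if matches else None
    return suggestions


# Made-up column names, as they might appear in a legacy export.
legacy_columns = ["Equipment ID", "Time Stamp", "Failure-Mode", "Operator notes"]
mapping = suggest_mapping(legacy_columns)

for source, target in mapping.items():
    print(f"{source!r} -> {target!r}")
```

Columns without a sufficiently close match are left unmapped (`None`), which is where the manual part of a semi-automatic process would come in.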

The data as well as the mapping created are stored, allowing the dataset’s transitioning to the “pre-processed” status (Data Storage Layer). Afterwards, two parallel processes start in the Data Mining & Analytics Layer, which practically delivers the intelligence of the UPTIME_ANALYZE component by defining, training, executing and experimenting with different machine learning algorithms.

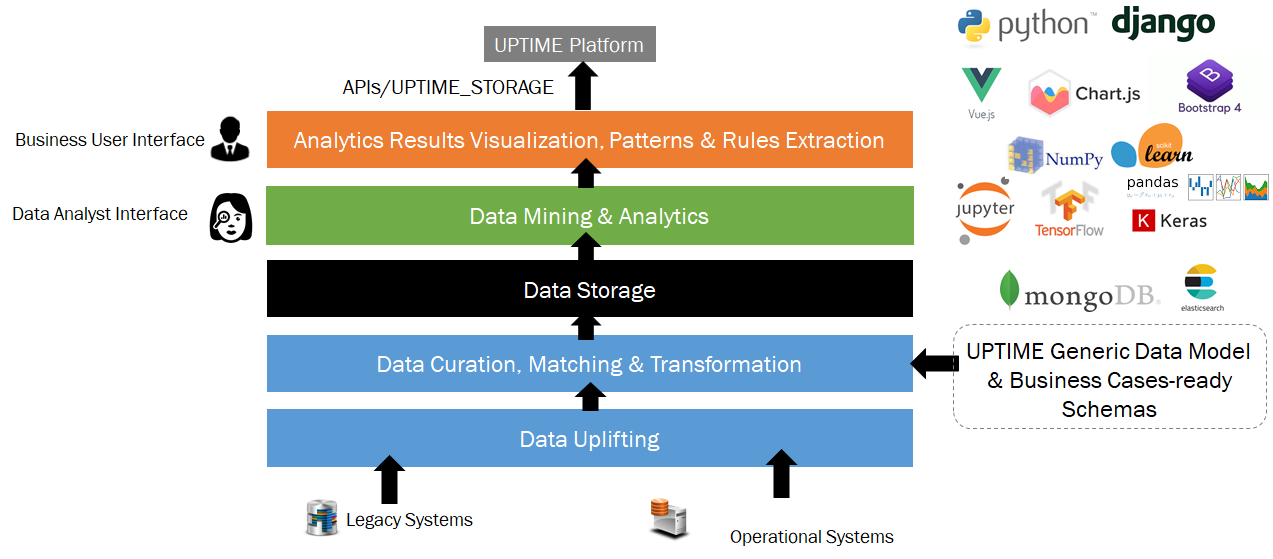

1.) The data analyst has the dataset at his/her disposal and may experiment with the different machine learning algorithms already provided in UPTIME_ANALYZE in the dedicated Data Analyst Interface (e.g. LSTM (Long Short Term Memory) neural network for Time Series Prediction, Kohonen’s Self-Organizing Maps (SOMs) for Dimensionality Reduction, SVM and Random Forest for Classification).

2.) With the help of intuitive and interactive visualizations in the Business User Interface, the business user can obtain a quick understanding of the uplifted data.

As soon as the data analyst’s analysis of the dataset concludes, the results are communicated from the Data Analyst Interface to the Business User Interface in the Analytics Results Visualization, Patterns and Rules Extraction Layer. In this way, business users have the outcomes of the machine learning analysis at their disposal and can navigate them intuitively in their own interface, as depicted above.

If you are interested in a possible deployment of UPTIME_ANALYZE in your context, please feel free to contact us.

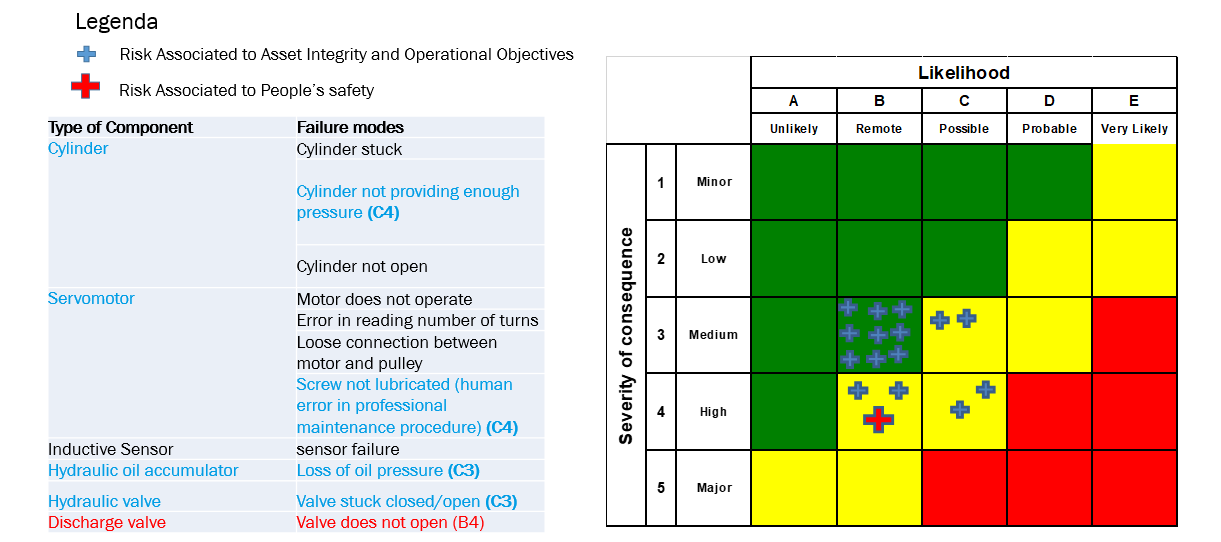

Failure Modes, Effects and Criticality Analysis (FMECA) is one of the analytical approaches to system design that is used to assess failure impacts. UPTIME_FMECA adds the definition and estimation of the occurrence probability of each failure mode that can occur in a system and provides an evaluation of its consequences.

UPTIME_FMECA starts from the identification of the failure modes (i.e. how something can break down or fail) associated with each component of a piece of equipment and analyses the impact of such failures on the whole system according to its physical and logical design. Failure occurrence probability is then estimated on the basis of simulations or historical records. The combination of these two elements gives feedback on the reliability of the chosen design, identifying weak elements for which improvements are needed.
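As a rough illustration of how the two elements can be combined, the following Python sketch ranks failure modes by a simple criticality score, taken here as occurrence probability multiplied by a severity rating. This is a common simplification and an assumption on our side, not the scoring used inside UPTIME_FMECA. The components, probabilities and the 1 to 5 severity scale are all made up for the example.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    component: str
    description: str
    occurrence_probability: float  # e.g. estimated from simulations or historical records
    severity: int  # hypothetical scale: 1 (negligible) to 5 (catastrophic)

    @property
    def criticality(self):
        # Assumed scoring rule: probability of occurrence times severity of impact.
        return self.occurrence_probability * self.severity


def rank_by_criticality(modes):
    """Return failure modes ordered from most to least critical."""
    return sorted(modes, key=lambda mode: mode.criticality, reverse=True)


# Illustrative values only; they are not taken from any UPTIME business case.
modes = [
    FailureMode("hydraulic unit", "oil leak", 0.05, 5),
    FailureMode("punch tool", "wear", 0.30, 3),
    FailureMode("position sensor", "drift", 0.10, 2),
]

ranking = rank_by_criticality(modes)
for mode in ranking:
    print(f"{mode.component}: {mode.description} (criticality {mode.criticality:.2f})")
```

The components at the top of such a ranking would be the weak elements to look at first.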

Key advantages of UPTIME_FMECA include:

• Provide a systematic and standard approach to identifying failure modes and analysing risks in the design of a system, creating a knowledge base

• Allow for synergies between safety and cybersecurity by extending the concept of FMECA to intentional actions (i.e. cybersecurity threats) and operator errors

• Analyse potential failure modes and risks in the digital twin of a system

The main objective of UPTIME_FMECA analysis is to reduce the consequences of critical failures. This is applicable not only to physical systems but also to any process. The effects to be evaluated range from local effects (i.e. related to the component itself and its functionality) to impacts on assets and production, people’s safety, the environment and the company’s reputation, including potential domino effects.

For implementation in the Whirlpool Business Case, an FMECA analysis is conducted on the Punching Bench Station, which belongs to a new drum production line at Whirlpool. The analysis is performed in three main steps:

1. Carry out an FMECA analysis at Design Level

2. Identify physical measures associated with the most critical components

3. Model the relation between physical measures and failure rate
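To make step 3 more concrete, here is one way such a relation could be sketched in Python. The log-linear form (failure rate growing exponentially with the measure), the vibration readings and the failure rates are all assumptions chosen for illustration. They are not Whirlpool data, and the model actually used in the business case is not described in this newsletter.

```python
import math


def fit_log_linear(measures, failure_rates):
    """Least-squares fit of ln(failure_rate) = intercept + slope * measure."""
    n = len(measures)
    log_rates = [math.log(rate) for rate in failure_rates]
    mean_x = sum(measures) / n
    mean_y = sum(log_rates) / n
    sxx = sum((x - mean_x) ** 2 for x in measures)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(measures, log_rates))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict_failure_rate(measure, slope, intercept):
    """Estimate the failure rate for a given value of the physical measure."""
    return math.exp(intercept + slope * measure)


# Made-up observations: the failure rate doubles with each extra mm/s of vibration.
vibration_mm_s = [1.0, 2.0, 3.0, 4.0]
failures_per_1000h = [0.1, 0.2, 0.4, 0.8]

slope, intercept = fit_log_linear(vibration_mm_s, failures_per_1000h)
estimate = predict_failure_rate(5.0, slope, intercept)
print(f"Estimated failures per 1000 h at 5.0 mm/s: {estimate:.2f}")
```

In practice the choice of model form would depend on the component and on how much failure history is available.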

According to the preliminary results of the FMECA analysis, a simplified model of the equipment system is developed, and new physical measures are identified. If you are interested in a possible deployment of UPTIME_FMECA in your context, please feel free to contact us.

Implementation of UPTIME Platform in the Whirlpool Business Case

The UPTIME Platform is deployed in and validated against three industrial use cases: (1) production and logistics systems in the aviation sector, (2) white goods production line and (3) cold rolling for steel straps.

In this 5th edition, Pierluigi Petrali, Manager of Manufacturing R&D of Whirlpool Corporation based in Italy, shares with us his first-hand experience and some lessons learned from the recent implementation of the UPTIME Platform in the Whirlpool Business Case and provides some practical guidelines for manufacturers to get started with Predictive Maintenance.

What has been achieved so far after the first implementation phase of the UPTIME Platform in the Whirlpool Case?

In the first phase, we mainly concentrated our efforts on the implementation of the analytical tools provided by the component UPTIME_ANALYZE. A broad spectrum of data was made available to UPTIME_ANALYZE and many different algorithms were evaluated in order to understand which are best at detecting the health status of our equipment. Moreover, a UPTIME_FMECA analysis was performed, with the main objective of addressing the monitoring, by means of sensors, of physical measures that could contribute to the prognosis of failure modes or causes and to failure rate estimation. As a result, additional sensors were installed on the Whirlpool drum production line.

What are the main challenges in the development and implementation phase and some lessons learned so far?

During the first implementation phase, we realized that finding the relevant piece of information hidden in large amounts of data turned out to be more difficult than initially thought.

One of the main learnings is that data quality needs to be ensured from the beginning of the process. This implies spending more time, effort and money to carefully select the sensor type, data format, tags, and correlating information. This turns out to be particularly true when dealing with human-generated data: if operators perceive data entry as not useful, time-consuming, boring and out of scope, this will inevitably produce bad data.

Quantity of data is another important aspect. A stable and controlled process has less variation, so machine learning requires large sets of data to yield accurate results. This aspect of data collection also needs to be designed months, or even years, in advance, before the real need emerges.

This experience translates into some simple, even counterintuitive, guidelines:

1. Anticipate the installation of sensors and data gathering. The best way is to do it during the first installation of the equipment or at its first revamp activity. Don’t underestimate the amount of data you will need in order to obtain good machine learning results. This of course also requires economic justification, since the investment in new sensors and data storage will only pay back after some years.

2. Gather more data than needed.

A common piece of advice is to design a data gathering campaign starting from the current need. This, however, could lead to missing the right data history when a future need emerges. In an ideal state of infinite capacity, the data gathering activities should be able to capture the full ontological description of the system under design. Of course, this may not be feasible in all real-life situations, but a good strategy could be to equip the machine with as many sensors as possible.

3. Start initiatives to preserve and improve the current datasets, even if not immediately needed. For example, start migrating Excel files distributed across different PCs into common shared databases, taking care to perform good data cleaning and normalization (for example, converting local-language descriptions in data and metadata to English).

Finally, the third important learning is that Data Scientists and Process Experts still don’t speak the same language, and it takes significant time and effort from mediators to help them communicate properly. This is also an aspect that needs to be taken into account and carefully planned. Companies definitely need to close the “skills” gap, and there are different strategies applicable:

• train Process Experts on data science;

• train Data Scientists on subject matter;

• develop a new role of Mediators, which stays in between and shares a minimum common ground to enable clear communication in extreme cases.

What are the next steps of the project for the Whirlpool Business Case?

In this second phase, Whirlpool is planning to further enrich the dataset with newly installed sensors, to continue the evaluation of machine learning algorithms and to build basic rules to establish the health status of the equipment.

Pierluigi Petrali will speak in more detail about predictive maintenance in the white goods industry and the implementation of the UPTIME Platform in the upcoming webinar. If you are interested, please feel free to register here.

The 1st UPTIME Webinar “Predictive Maintenance: Lessons Learned & Best Practices in the White Goods Industry” will be held on 19 March 2020, from 11:00 to 12:30 CET. We are eager to present the UPTIME Predictive Maintenance Platform and its implementation in the Whirlpool business case. This webinar is free of charge and is dedicated to people who want to learn about and see a concrete implementation of our Predictive Maintenance Platform in a real business case. The webinar will be interactive: you will have the opportunity to ask the expert panel questions, and we will be happy to receive your feedback. For more details about the webinar, please visit here. If you have any questions or comments, please contact us.

UPTIME, together with the other ForeSee Cluster projects SERENA and Z-BRE4K, is co-organising an international workshop on “Interoperability for Maintenance: Semantic Model, Terminology and Ontology” in conjunction with the I-ESA 2020 conference, which will be held in Tarbes, France. The workshop will take place from 24 – 25 March 2020.

The workshop will focus on the outcomes of projects implementing various advanced technologies to improve interoperability in production systems and plants, as well as on the definition of a structured roadmap with trends for future advanced maintenance processes. Call for papers and further information: Interop-maintenance-2020

The second international workshop on Key Enabling Technologies for Digital Factories (KET4DF) will be held in Grenoble, France, from 8 – 9 June 2020. It is organised in conjunction with the 32nd International Conference on Advanced Information Systems Engineering (CAiSE) 2020 and supported by 6 EU projects: UPTIME, FIRST, QU4LITY, BOOST 4.0, Z-BRE4K and DIH4CP.

The workshop will focus on technologies for Industry 4.0, with specific reference to digital factories and smart manufacturing.

The deadline for paper submission is 1 March 2020. For call for papers and further information, please visit: KET4DF2020

08 – 12 June 2020

Grenoble, France